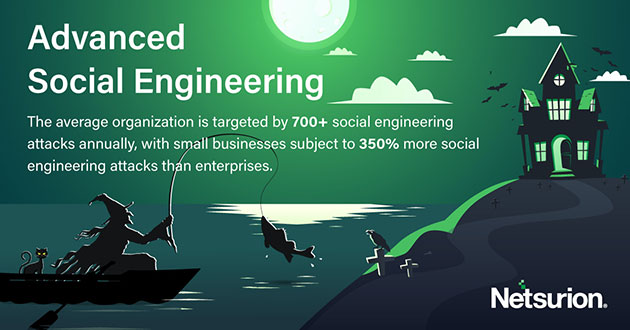

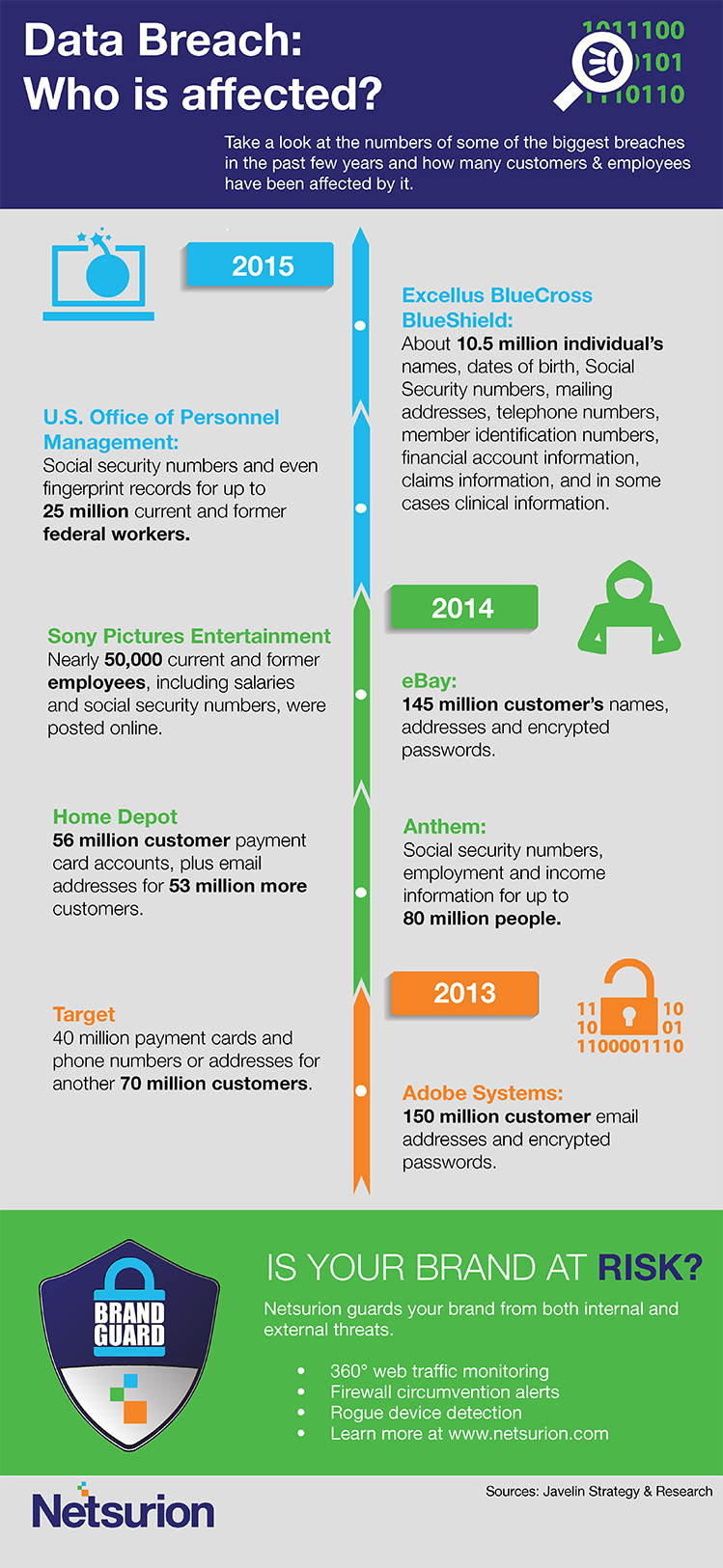

What do Microsoft, Okta, T-Mobile, Nvidia, and LG all have in common? Well, for starters, they have all been extorted by one of the most prolific and unpredictable hacking groups of 2022.

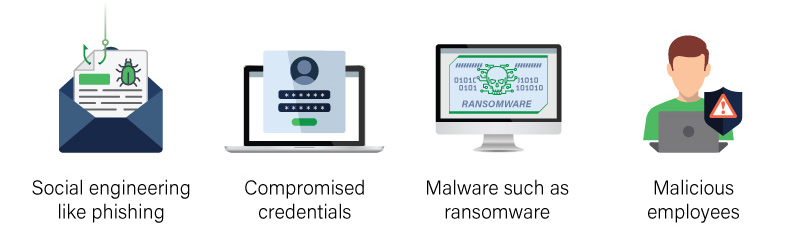

The group, which goes by LAPSUS$, infiltrated and extorted a handful of the world’s largest, preeminent tech giants through an unusual mix of SIM-swapping, social engineering, malware, and other means. Its motives appear to be financial: it threatens to publicly release proprietary data, or simply dumps private data on its digital channels for all to see. That openness separates LAPSUS$ from other “successful” hackers and groups of the last several years, not to mention that its members may all be between 16 and 21 years old.

Let’s take a deep dive into the psyche of LAPSUS$ and what exactly makes them so dangerous, yet so bewildering.

Who Is Truly Behind LAPSUS$?

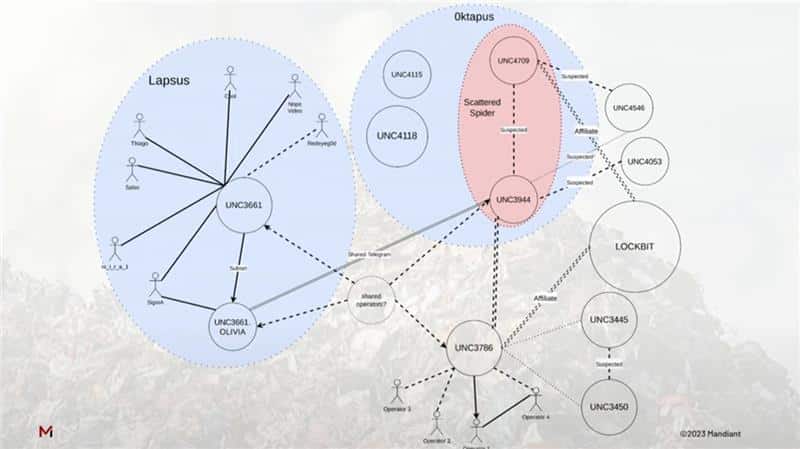

Uncovering the leader, or “brains of the operation,” can lead to a far better understanding of a criminal organization and, ultimately, to dismantling it. Unfortunately, this cohort seems to work in a decentralized manner, closer to chaos than order. Some of its infamy certainly arises from its childish antics, which lead to assumptions of inexperience.

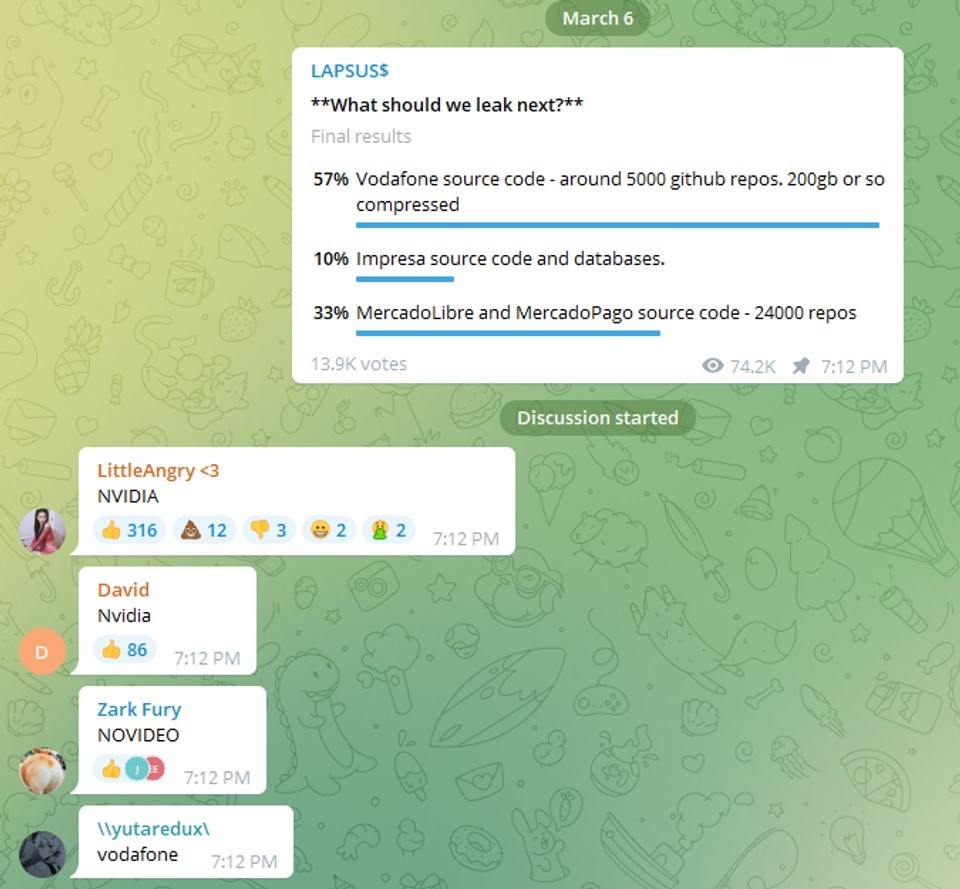

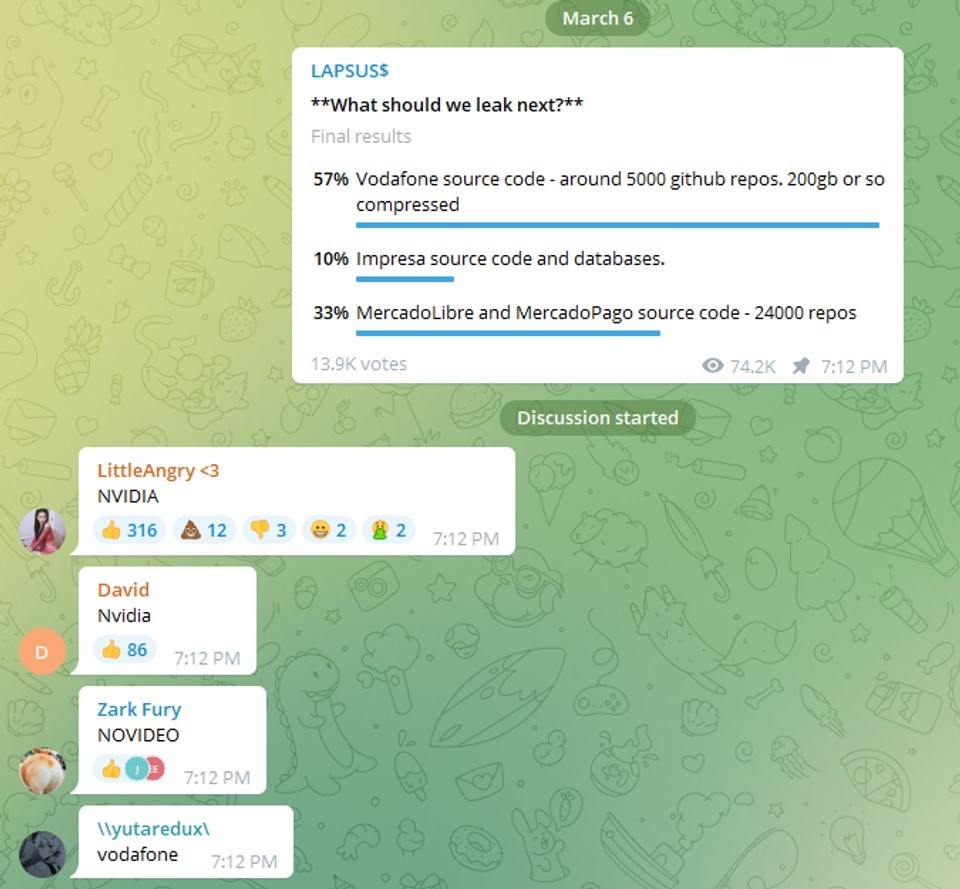

The list of high-profile attacks would be enough for most cybercriminals to “hang it up” and retreat to the dark recesses of the internet to preserve their earnings and evade detection. LAPSUS$ instead touts these victories on a public Telegram channel and even polls viewers on its next “hit”. The social community seems to be the bread and butter of this group, hinting even further at its adolescent makeup.

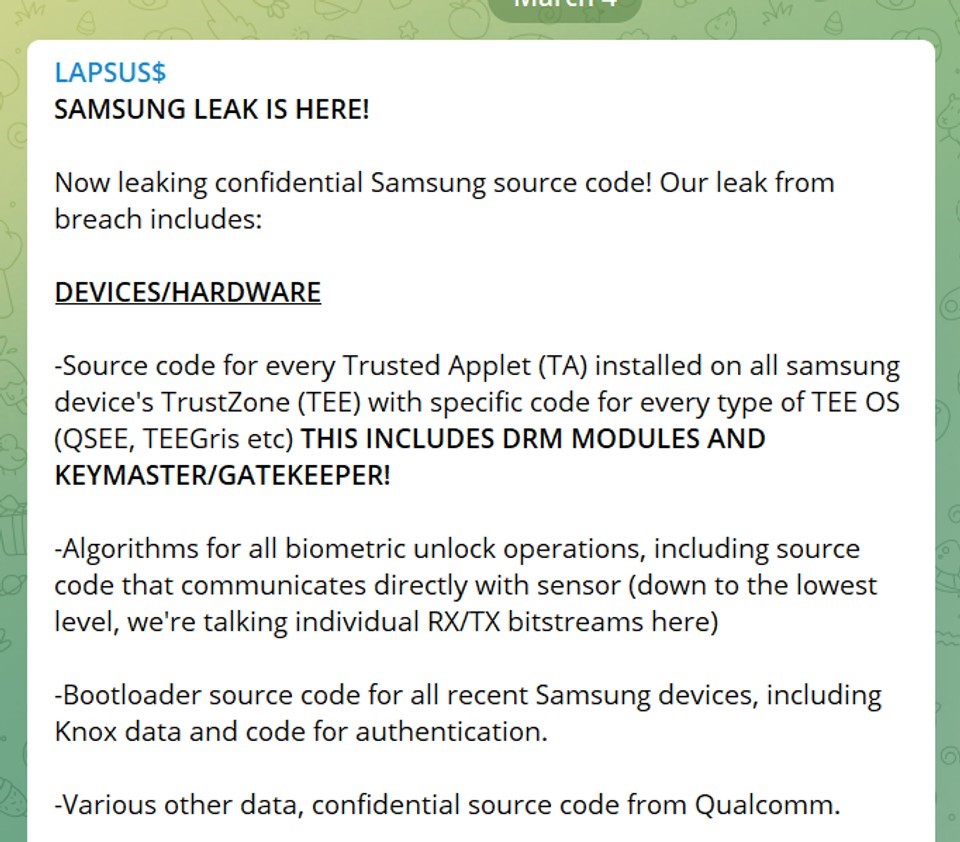

The LAPSUS$ group hit headlines in December of 2021 with a barrage of attacks against South American organizations, including Brazil’s Ministry of Health and other government agencies in the region, before expanding its scope to larger, multinational companies and truly catapulting into the limelight. At this point, the group had the full attention of the cybersecurity community and didn’t intend to squander it.

Fame and fortune stood around the corner as the group turned to international tech giants as its next prey, hoping to use those companies’ immense influence and media coverage to its own advantage.

As stated on its Telegram channel, LAPSUS$ denies any state or political motives for its extortion attacks, leading some to question the seemingly random actions of the group. Is there a collective goal beyond notoriety and wealth?

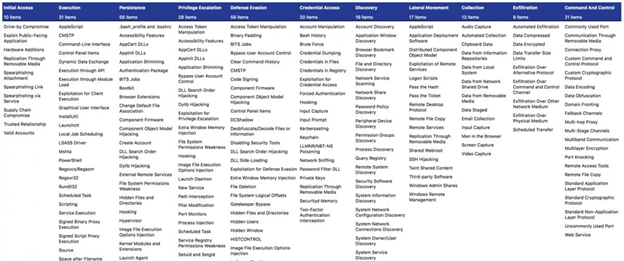

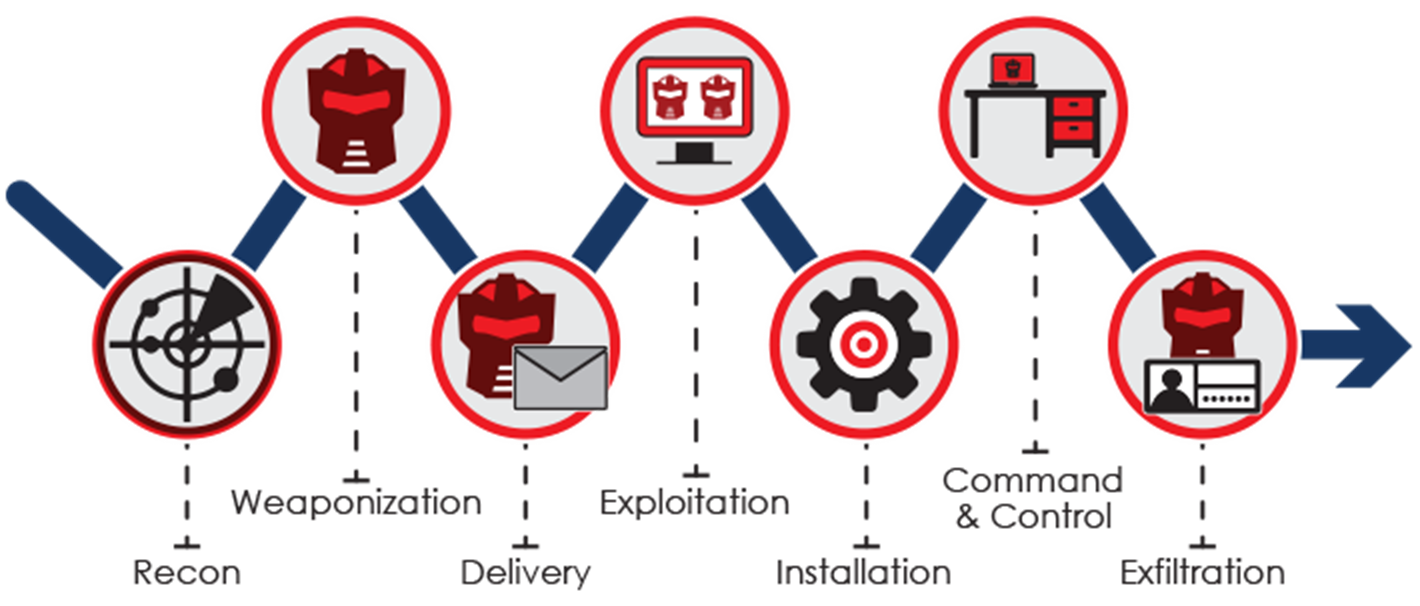

How Does LAPSUS$ Operate?

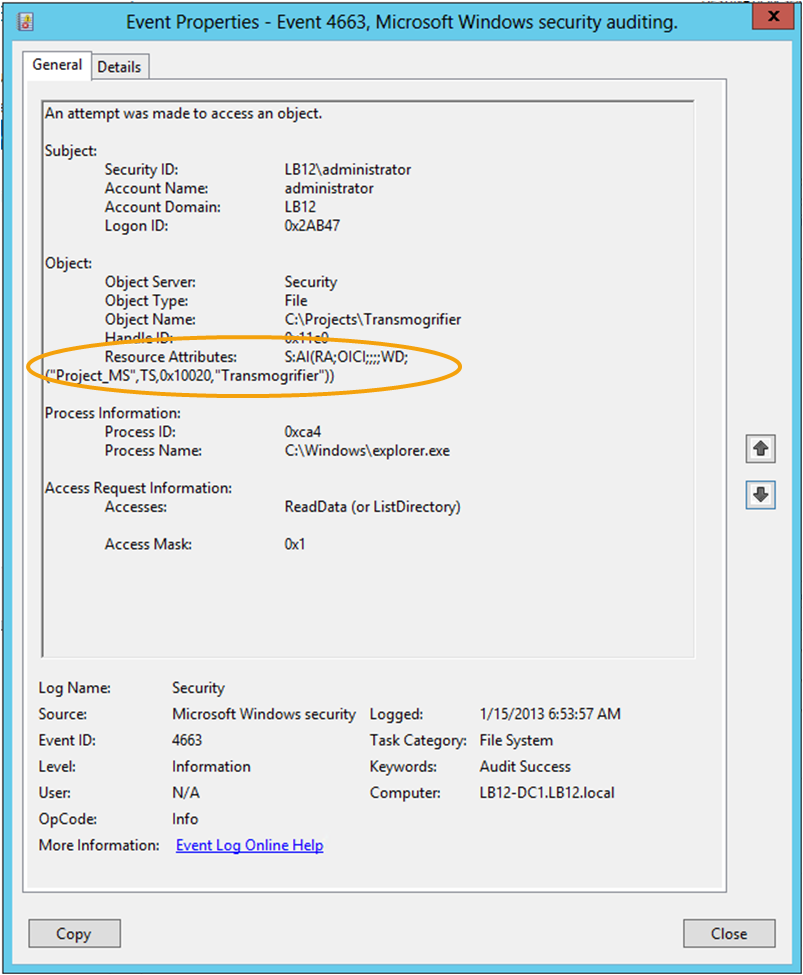

Microsoft released a ground-breaking report in March of 2022 outlining the inner workings of LAPSUS$, with speculation on how the group was able to extort the largest tech giants in the world. The report did not name the members of the group. Instead, it detailed their model of pure destruction and the social engineering methods used to extract data from even the most secure of systems.

While the group may be made up of juveniles, Microsoft repeatedly pointed to the intricate, elaborate, and downright cunning methods it used, similar to those of the most mature threat actors.

Let’s take a look at their strategies.

Telegram Channel

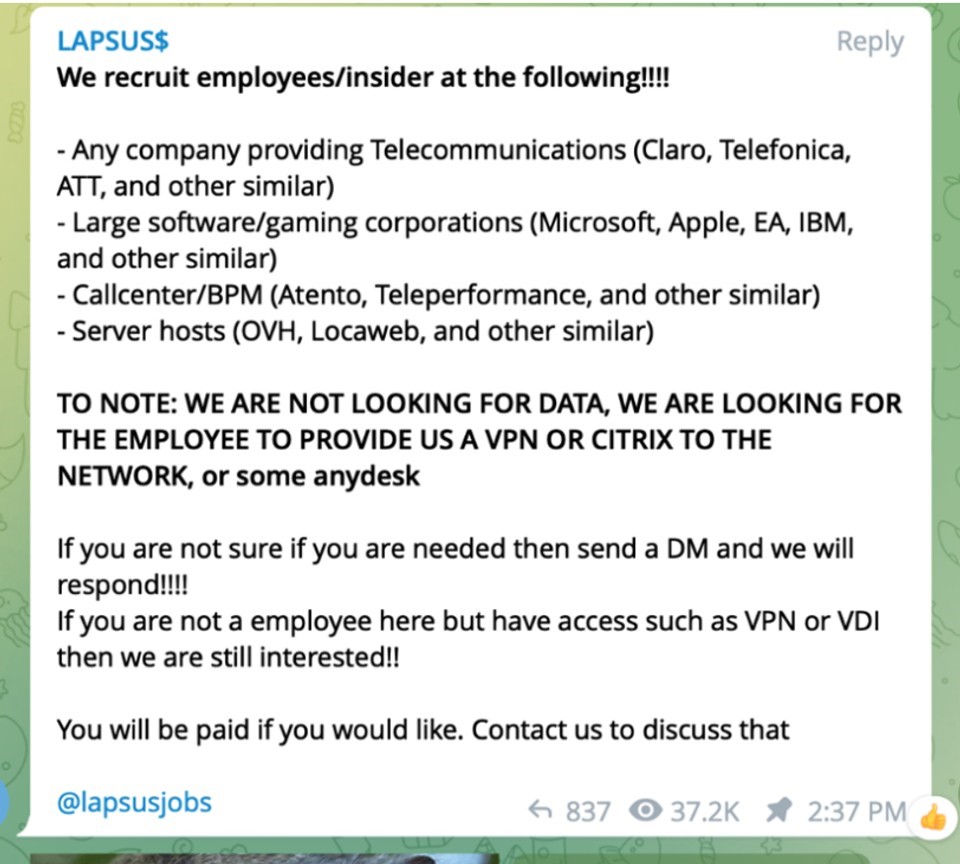

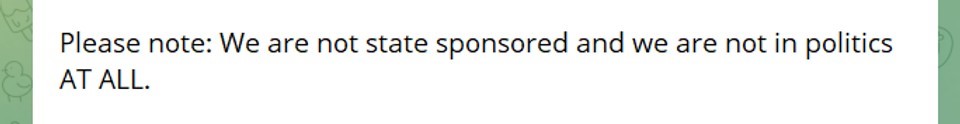

LAPSUS$ proved time and time again that it is to be taken seriously regardless of the make-up of the group, forcing C-suite cybersecurity executives to take notice swiftly. Microsoft stated that the group tends to gain seemingly impossible access via “social engineering”, including bribing employees at targeted organizations’ customer support call centers and help desks.

Microsoft wrote, “Microsoft found instances where the group successfully gained access to target organizations through recruited employees (or employees of their suppliers or business partners).”

LAPSUS$ has recruited “insiders” via social media channels since the beginning of its attacks, using nicknames such as “Oklaqq” and “WhiteDoxbin”, to name a couple. These recruitment posts offered upwards of $20k/week to informants employed within companies like AT&T, Verizon, and T-Mobile. Their message was simple: just get us in the door and we will do the rest.

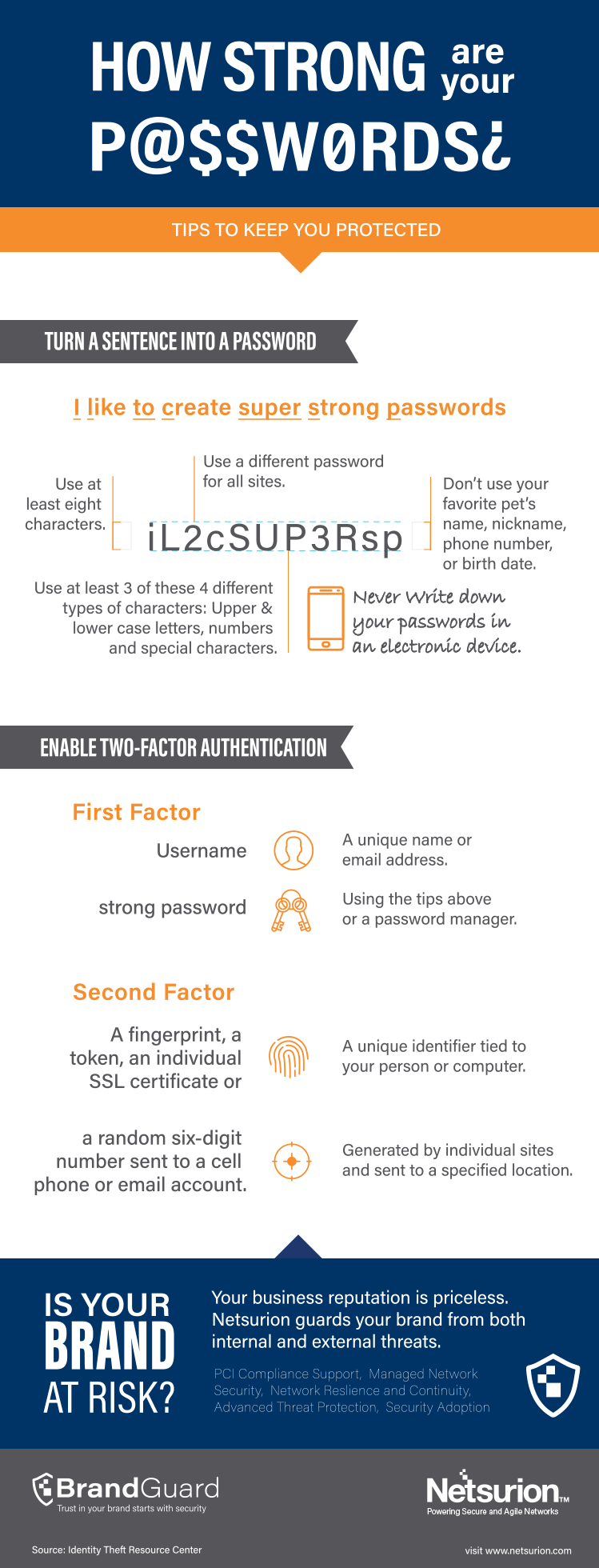

SIM-Swapping Method

SIM swapping is most simply described as transferring the target’s mobile phone number to another device controlled by the hacker. This opens the door for attackers to receive the one-time codes and passwords sent to that number for easy access to protected systems, while potentially gaining the ability to reset passwords for total control.

“Their tactics include phone-based social engineering; SIM-swapping to facilitate account takeover; accessing personal email accounts of employees at target organizations; paying employees, suppliers, or business partners of target organizations for access to credentials and multifactor authentication (MFA) approval; and intruding in the ongoing crisis-communication calls of their targets,” Microsoft explained.

Unit 221B, an advanced cybersecurity consultancy from New York, shadows cybercriminals who perform SIM-swapping. It was keeping tabs on members of LAPSUS$ before the group ever formed, and still does. The group’s techniques, while wildly effective, are not unique, as this form of SIM-swapping has been a heavy focus within major phone companies for many years. “LAPSUS$ may be the first to make it extremely obvious to the rest of the world that there are a lot of soft targets that are not telcos,” said Allison Nixon, Chief Research Officer of Unit 221B. “The world is full of targets that are not used to being targeted this way.”

The group also employs a malware program called “RedLine Stealer”, or simply “RedLine”, which can be purchased on hacker forums and is commonly used to steal information and infect entire systems. Logins, passcodes, autofill data, and even stored payment info can be uncovered and extracted to access a plethora of personal accounts such as:

- Social Media

- Email

- Banking

- Crypto wallets, and more

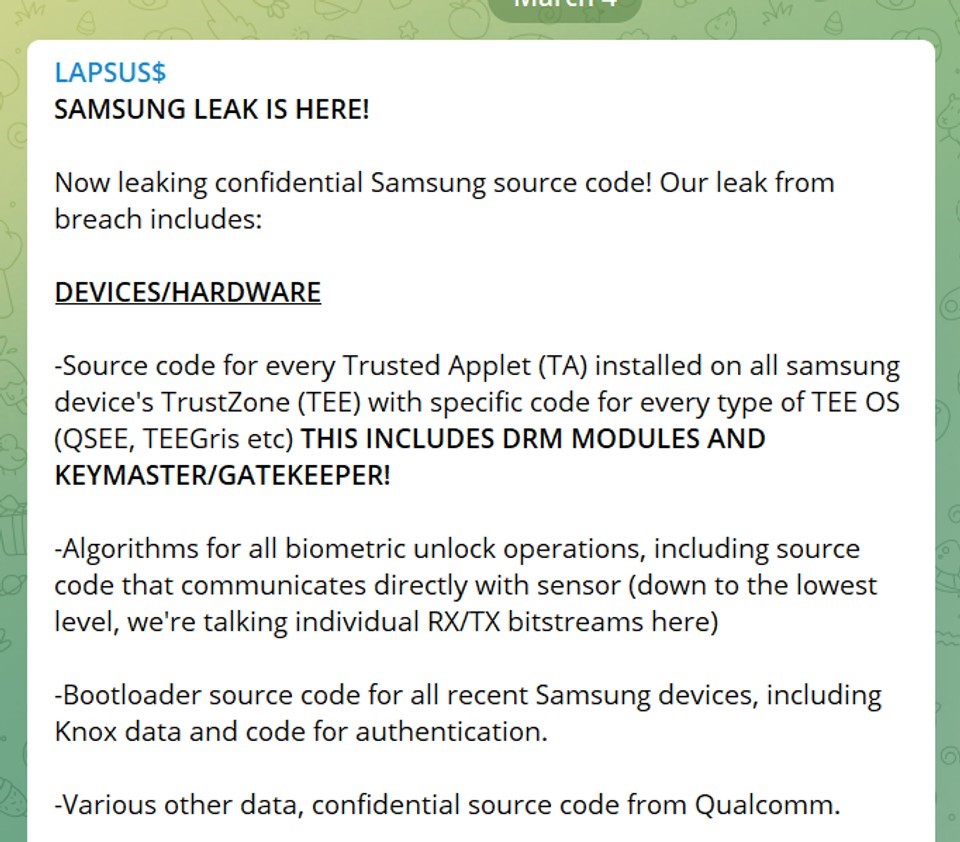

RedLine Stealer was once again put into action by LAPSUS$ against Electronic Arts (EA), with the group threatening to reveal 780 GB of proprietary source code unless a hefty payment was received. The hackers revealed they gained control of EA’s data via authentication cookies purchased on a dark web marketplace called Genesis.

“The existence of this leak was initially disclosed on June 10, when the hackers posted a thread on an underground hacking forum claiming to have EA data, which they were willing to sell for $28 million. The hackers said they used the authentication cookies to mimic an already-logged-in EA employee’s account and access EA’s Slack channel and then trick an EA IT support staffer into granting them access to the company’s internal network,” wrote Catalin Cimpanu for The Record.

Social Engineering & Corporate Extortion

Social engineering attacks work by psychologically manipulating individuals into handing over credentials or other critical data, which attackers then use for data theft and other debilitating ends. Microsoft stated that LAPSUS$ gained “intimate knowledge” of various companies through these tactics, giving the group unbelievable front-door access to systems.

LAPSUS$ was known to frequently dial help desks, sometimes bribing or tricking employees into resetting critical account information, and then to learn how the organization handled these security incidents by listening in on communication channels like Teams and Slack. The group used this “training session” to truly understand the methods and protocols these organizations followed to deter the very attack the group planned to carry out. This insider knowledge allowed the group to circumvent security controls and remain hidden within the system while formulating further plots for extortion.

Microsoft released a statement, “The group used the previously gathered information (for example, profile pictures) and had a native-English-sounding caller speak with the help desk personnel to enhance their social engineering lure. Observed actions have included DEV-0537 answering common recovery prompts such as “first street you lived on” or “mother’s maiden name” to convince help desk personnel of authenticity.”

Initial access is granted through various methods, such as using RedLine, searching public code repositories, and even purchasing remote access credentials via the dark web. Of course, more straightforward approaches such as directly paying employees for access also proved worthwhile.

Multi-factor authentication (MFA) seems like a safeguard with little room for security lapses, but LAPSUS$ managed to defeat it through session token replay and by repeatedly spamming account holders with MFA prompts once the group had obtained the password. The group stated in its Telegram chatroom that success rates were much higher when users were targeted with MFA prompts in the middle of the night, since people tend to simply select “Accept” rather than have their precious hours of sleep interrupted.
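From a defender’s point of view, the prompt-spamming pattern described above leaves a recognizable trail: a burst of denied or ignored MFA prompts followed by a sudden approval. The following is a minimal Python sketch of how such a burst might be flagged in an authentication log. The event format, the function name `find_mfa_fatigue`, and the thresholds (5 unanswered prompts within 10 minutes) are all assumptions made for illustration; they do not come from Microsoft’s report or any particular identity provider.

```python
from collections import deque
from datetime import datetime, timedelta


def find_mfa_fatigue(events, max_unanswered=5, window=timedelta(minutes=10)):
    """Flag approvals that follow a burst of denied or ignored MFA prompts.

    `events` is an iterable of (user, timestamp, outcome) tuples sorted by
    timestamp, where outcome is "approved", "denied", or "timeout".
    The format and thresholds are hypothetical, chosen for illustration.
    """
    recent = {}   # user -> timestamps of recent unanswered prompts
    flagged = []
    for user, when, outcome in events:
        queue = recent.setdefault(user, deque())
        # Forget unanswered prompts that fell out of the sliding window.
        while queue and when - queue[0] > window:
            queue.popleft()
        if outcome == "approved":
            if len(queue) >= max_unanswered:
                flagged.append((user, when, len(queue)))
            queue.clear()
        else:
            queue.append(when)
    return flagged


# Hypothetical log: "alice" is spammed at 3 a.m. and finally taps Accept,
# while "bob" mistypes once during the day and then approves normally.
night = datetime(2022, 3, 1, 3, 0)
day = datetime(2022, 3, 1, 9, 0)
events = [("alice", night + timedelta(minutes=i), "timeout") for i in range(6)]
events.append(("alice", night + timedelta(minutes=6), "approved"))
events.append(("bob", day, "denied"))
events.append(("bob", day + timedelta(minutes=1), "approved"))

flagged = find_mfa_fatigue(events)
for user, when, count in flagged:
    print(f"{user}: approval at {when} after {count} unanswered prompts")
```

In this sample only the approval on the "alice" account is flagged. A real deployment would more likely rely on the identity provider’s own controls, such as number matching or prompt rate limits, than on after-the-fact log analysis alone.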

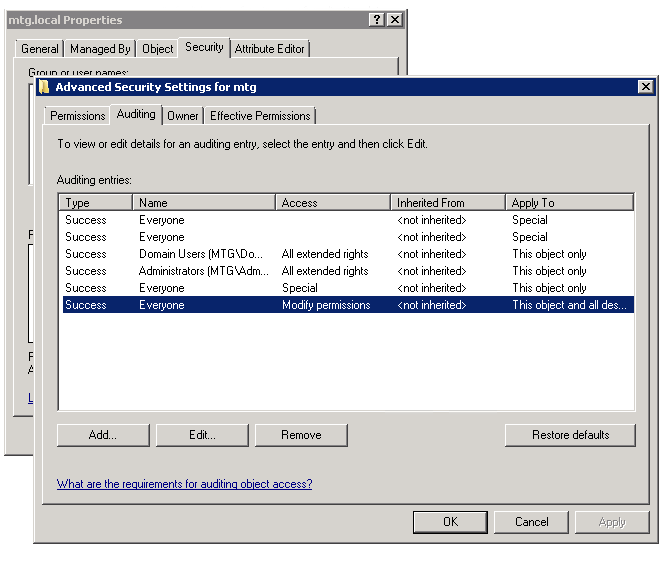

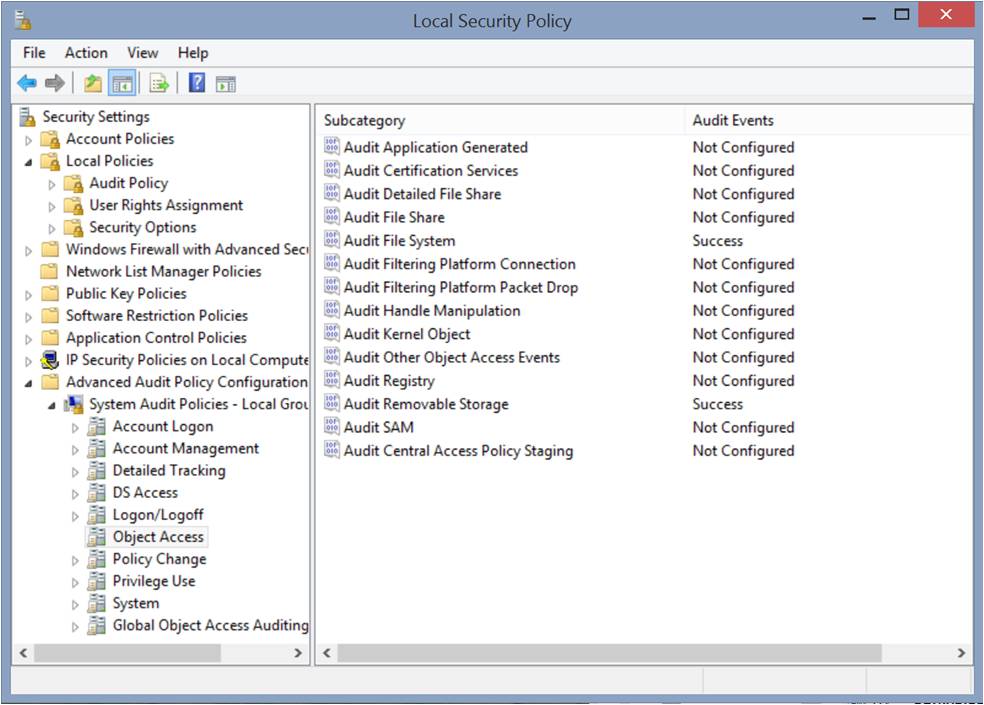

Data Harvesting and VPNs

Virtual private networks (VPNs) are another key on the LAPSUS$ keychain. The group used them in a way that prevented any “impossible travel” alerts from being triggered within the system. These alerts, tied to cloud monitoring services, watch user logins for suspicious activity, such as a login attempt from, let’s say, Colorado followed by another from New York five minutes later. Hence the “impossible” label: one person could not physically travel between those locations that quickly. Bypassing this feature is a critical step in remaining hidden long enough to exfiltrate target data.
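To make the idea concrete, here is a minimal Python sketch of an impossible-travel check. It is not how any specific cloud monitoring product implements the alert; the `Login` record, the 900 km/h speed ceiling (roughly airliner cruising speed), and the 50 km tolerance for geolocation noise are all assumptions made for illustration. The check computes the great-circle distance between two consecutive logins and flags the pair if covering that distance in the elapsed time would require an implausible speed.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_SPEED_KMH = 900.0  # assumption: about airliner cruising speed
MIN_DISTANCE_KM = 50.0           # assumption: tolerance for IP geolocation noise


@dataclass
class Login:
    user: str
    when: datetime
    lat: float
    lon: float


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points, in kilometers."""
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


def is_impossible_travel(prev, curr, max_speed_kmh=MAX_PLAUSIBLE_SPEED_KMH):
    """Return True if moving between the two logins would require an implausible speed."""
    distance = haversine_km(prev.lat, prev.lon, curr.lat, curr.lon)
    if distance < MIN_DISTANCE_KM:
        return False  # nearby locations never trip the alert
    hours = abs((curr.when - prev.when).total_seconds()) / 3600
    if hours == 0:
        return True   # far apart at the same instant
    return distance / hours > max_speed_kmh


# The Colorado / New York example from the text, five minutes apart.
t0 = datetime(2022, 3, 1, 12, 0)
denver = Login("alice", t0, 39.7392, -104.9903)
new_york = Login("alice", t0 + timedelta(minutes=5), 40.7128, -74.0060)
# A second login that appears to come from the same city as the victim.
denver_again = Login("alice", t0 + timedelta(minutes=5), 39.7392, -104.9903)

print(is_impossible_travel(denver, new_york))      # flagged
print(is_impossible_travel(denver, denver_again))  # not flagged
```

The second call shows why a VPN is useful to an attacker here: a login that appears to originate near the legitimate user gives a check like this nothing to flag.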

Microsoft reported, “DEV-0537 has been observed leveraging access to cloud assets to create new virtual machines within the target’s cloud environment, which they use as actor-controlled infrastructure to perform further attacks across the target organization.”

Once inside, LAPSUS$ had the power to lock the business out of its own cloud platform, giving the group absolute control. Next, all inbound and outbound email was directed to the group’s infrastructure, where data was harvested before systems were deleted entirely. Finally, the group would either publicly release the stolen data or use the threat of release as leverage for extortion.

All good things must come to an end…right?

Arrests

Bloomberg released a breaking report stating that the entire operation was being driven by a 16-year-old from Oxfordshire, UK, with seven arrests following on March 24th.

The 16-year-old boy's father told the BBC: "I had never heard about any of this until recently. He's never talked about any hacking, but he is very good at computers and spends a lot of time on the computer. I always thought he was playing games."

All of those arrested were immediately released pending a deeper investigation, with confirmation coming on April 2nd that two individuals had been charged in connection with LAPSUS$ and the attacks on numerous tech giants.

Offenses included:

- 3 counts of unauthorized access with intent to impair the operation of, or hinder access to, a computer

- 2 counts of fraud by false representation

- 1 count of causing a computer to perform a function to secure unauthorized access to a program

Both individuals are reportedly on bail with limited details available due to their status as minors.

For a cybercriminal group, letting your voice be heard can be an opportunity for increased notoriety, but it can also lead to increased investigation and scrutiny by law enforcement agencies around the world. The charges against the 16- and 17-year-old came just days after the public unveiling, on March 30th, of source code for mobile apps belonging to these companies:

- Facebook

- DHL

- Abbott

- AB InBev, and others

LAPSUS$’s Payday

You might be wondering, with such prestigious names on their “hit list”, how well lined is the group’s wallet? Well, speculation has arisen that LAPSUS$ has amassed upwards of $160 million in revenue.

This figure is unconfirmed by members of LAPSUS$, but their public Telegram channel released details of a crypto wallet containing 3,790.62159317 bitcoin.

Where Are They Now?

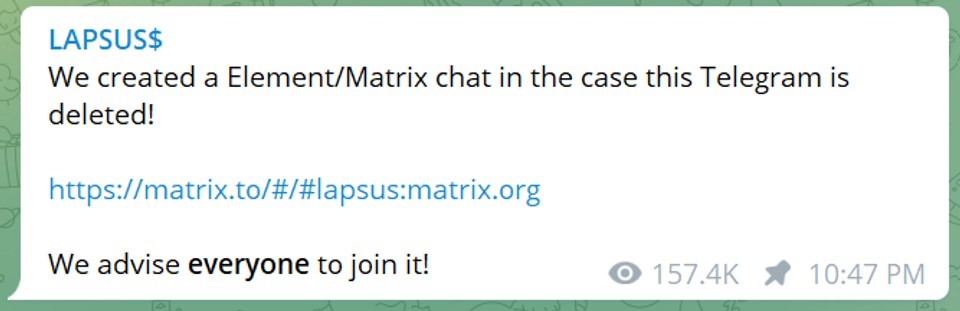

LAPSUS$ announced via its Telegram channel, “We created a Element/Matrix chat in the case this Telegram is deleted!

We advise everyone to join it!”

This is the last known message from the group, leading to speculation that it may be regrouping and finding new paths of attack after cutting it close with law enforcement. All of the intrusions mentioned in this blog occurred during a 3-month span, so perhaps the master plan was to strike hard and strike fast, then jump out before everything came crashing down.

Let us know what you think: have we heard the last of LAPSUS$, or is this just the dawn of a new era of cybercrime?

Attack Timeline
